Physics can predict the behavior of simple systems very accurately. For a ball tossed in the air, you can solve an equation that reliably gives its trajectory.
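To make the ball example concrete, here is a minimal sketch of that kind of equation. It assumes the ball is thrown straight up and that air resistance can be ignored. The function names and the value used for g are choices made for this illustration.

```python
import math

G = 9.81  # gravitational acceleration near Earth's surface, m/s^2 (approximate)

def height(t, v0, y0=0.0):
    """Height in meters at time t for a ball thrown straight up at speed v0
    from height y0. Assumes no air resistance."""
    return y0 + v0 * t - 0.5 * G * t * t

def time_of_flight(v0, y0=0.0):
    """Time in seconds until the ball returns to height zero
    (the positive root of height(t) = 0)."""
    return (v0 + math.sqrt(v0 * v0 + 2.0 * G * y0)) / G

if __name__ == "__main__":
    v0 = 9.81  # launch speed in m/s, chosen so the numbers come out round
    print("time in the air:", time_of_flight(v0))
    print("height after 1 second:", height(1.0, v0))
```

With only one formula and one measured constant, there is nothing to tune. The prediction either matches the throw or it does not.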

But most things that happen in the world have many causes and many effects, and the causes and effects interact. To make predictions, computer programmers write complicated equations that describe how each factor causes changes in all the others. Then they start with what we know of the system now and try to predict its state in the future.
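The general recipe can be sketched in a few lines. This is a toy under simple assumptions, not any real model: the influences are taken to be linear, the table `rates` (how strongly factor j pushes factor i) is hypothetical, and time advances in small fixed steps.

```python
def step(state, rates, dt):
    """Advance every factor by one small time step.

    state[i] is the current value of factor i.
    rates[i][j] is the assumed strength with which factor j changes factor i.
    """
    n = len(state)
    return [
        state[i] + dt * sum(rates[i][j] * state[j] for j in range(n))
        for i in range(n)
    ]

def simulate(state, rates, dt, steps):
    """Start from what we know now and step forward to a future state."""
    for _ in range(steps):
        state = step(state, rates, dt)
    return state

if __name__ == "__main__":
    # Two made-up factors: factor 1 steadily drives factor 0 upward.
    rates = [[0.0, 1.0],
             [0.0, 0.0]]
    print(simulate([0.0, 1.0], rates, dt=0.1, steps=10))
```

Every entry in `rates` is a number the modeler has to choose, and a realistic model has far more of them than this one.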

Examples of computer models:

Computer models are used to predict stock market behavior. Fortunes have been made. Fortunes have been lost.

Computer models predict traffic flow, and traffic signals can be timed to avoid congestion.

Computer models are used to predict consumer behavior. Again, they have led to spectacular marketing successes and also some costly failures.

Successful computer models predict the weather, sometimes more than a week in advance.

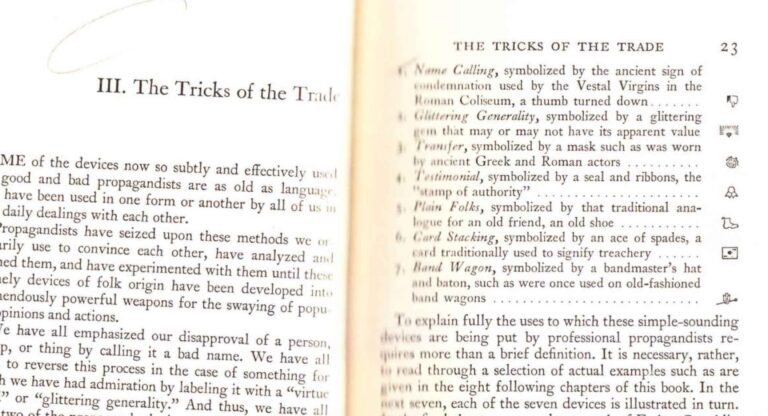

What are the pitfalls?

Computer models don’t produce understanding. They are based on a pre-existing understanding of how a system works. When the output matches the real world, that may be because that understanding was correct, or it may be because the modeler got lucky. But most often, it is because the modeler adjusted parameters in the model to make the results come out right. Because these systems are often very sensitive to multiple parameters, this is often quite easy to do.
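A toy illustration of how easy the tuning is, using invented numbers rather than any real data set: give a model one adjustable parameter per observation and it can reproduce the past exactly, whatever the true process was. The sketch below does this with a polynomial through six made-up observations that roughly follow a straight line. The function name `fit_through` and the data are hypothetical.

```python
def fit_through(points):
    """Return a polynomial 'model' that passes exactly through every
    (t, y) pair in points (Lagrange interpolation).
    It has one free parameter per observation."""
    def model(t):
        total = 0.0
        for i, (ti, yi) in enumerate(points):
            term = yi
            for j, (tj, _) in enumerate(points):
                if j != i:
                    term *= (t - tj) / (ti - tj)
            total += term
        return total
    return model

# Invented observations that rise by about 1 per time step.
observed = [(0, 0.0), (1, 1.1), (2, 1.9), (3, 3.2), (4, 3.9), (5, 5.1)]
model = fit_through(observed)

if __name__ == "__main__":
    for t, y in observed:
        print(t, y, model(t))       # the model reproduces the past exactly
    print("forecast for t=6:", model(6))
    print("forecast for t=7:", model(7))
```

The fit to the six observations is perfect, yet the forecast for the next step is roughly 14, where the trend in the data would suggest something near 6. A perfect match to the past says little about whether the model understands the system.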

It is easy to lie with computer models, easier even than the better-known art of lying with statistics. [1] Understanding someone else’s computer model takes more time than most scientists are willing to invest. And many people who are unfamiliar with the realities of computer modeling are impressed by a model’s complexity and assume that anyone who programs thousands of lines of computer code must know what he or she is doing.

So promoting a computer model is usually a low-risk venture. I can claim that my computer model predicts some frightening future possibility and perhaps I can influence you to buy my product or follow my advice, in order to stave off that possibility.

What is it good for?

If you can program a computer model based on something in the real world, and the model behaves in a way that mimics its target, this tells you nothing. It’s much too easy to jiggle a few parameters in the model to make its behavior match any desired goal.

On the other hand, if you try and try to make your model’s output look like the real world and it refuses to match up, then you have really learned something. You can be confident that your model reflects a basic misunderstanding of how the world works.

The most successful models are refined by a trial-and-error process. Weather prediction is a good example. Weather forecasts have gotten better over time, not because we have a better understanding of the forces that shape the weather, but because we have been feeding the models more than 50 years of weather data.

Examples of misuse

Computer models have been used to hype fear about global warming. Unlike weather prediction models, climate models have no training period in which they can be checked against reality and re-calibrated. (To do this would require detailed historic records of climate over tens of thousands of years.) The models in use today were trained during a decade when atmospheric CO2 was increasing and temperatures were rising. Unsurprisingly, they predict a strong positive correlation between CO2 and temperature. During the last seven years, CO2 continued to increase, but temperatures are no longer rising. The model output now conflicts with reality, yet we are expected to take the model’s dire predictions for the year 2050 seriously.

The world’s most notorious computer modeler is Neil Ferguson [2] of Imperial College London. In 2020, his models predicting millions of COVID deaths were used to justify lockdowns, closures, and social distancing on both sides of the Atlantic. The model results proved to be wildly exaggerated. Before COVID, Ferguson was responsible for computer models that grossly exaggerated the danger of mad cow disease and the 2009 swine flu. [3]

[1] https://www.psychologytoday.com/us/blog/am-i-right/202109/lies-damned-lies-and-statistics

[2] https://www.nature.com/articles/d41586-020-01003-6

[3] https://statmodeling.stat.columbia.edu/2020/05/08/so-the-real-scandal-is-why-did-anyone-ever-listen-to-this-guy/